Humane’s Ai Pin, which debuts today, is the most hyped piece of hardware in recent memory.

Backed by $240 million in funding from luminaries including Salesforce CEO Marc Benioff and OpenAI CEO Sam Altman, the device attaches to your lapel with magnets, listens to your requests like Siri, and will search the internet, translate your speech, or project an interface right onto your hand.

The device was revealed after five years of stealth development in a dramatic TED talk last May, in which Humane cofounder Imran Chaudhri waxed poetic about the need for “technology that disappears.” Then in October, we got our first full look at the hardware during Coperni’s Paris Fashion Week show—on a garment donned by Naomi Campbell.

Last week, I flew to San Francisco for a meeting ahead of the Ai Pin’s launch. After watching a scripted demo, complete with carefully choreographed jokes, I asked to try the device for myself. At that moment, Chaudhri and his cofounder and spouse, Bethany Bongiorno, glanced at their PR handlers. That wouldn’t be possible, one handler said, as Humane planned media hands-on testing post-launch.

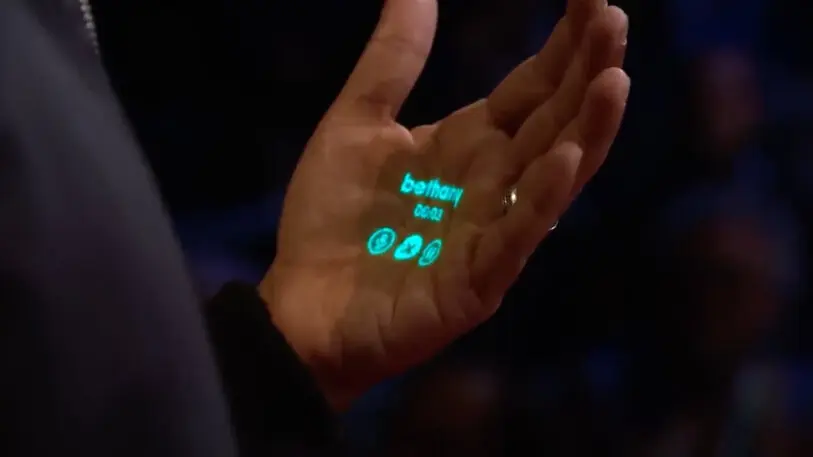

The Ai Pin is like a tiny smartphone that sits on your lapel instead of in your pocket. It will cost you $699, plus a monthly subscription fee of $24 that will give you a dedicated phone number and unlimited talk, text, data, and cloud storage. The Pin has a camera and an internet connection. Its biggest twist is that you can hold up your hand and it will project an interface onto it. For example, you can see the album you’re listening to on Tidal, with buttons to play and skip. By flicking your wrist and pinching your fingers—on the same hand that serves as the display—you can control the GUI on your skin, toggling through buttons you click with a pinch.

However, the device’s “Laser Ink Display” projector, which Humane says is the smallest and brightest ever built, produces WarGames-green text that is nearly illegible, and photos projected onto your hand look even worse.

That leaves its spoken AI interface, which connects to ChatGPT through Humane’s proprietary onboard AI, as the main way you interact with it. But seeing the demonstration, it honestly didn’t seem much more advanced than using Siri on your iPhone or Apple Watch. A world with no screens might sound lovely, until you actually consider the ramifications of using a modern smartphone solely by talking to it.

Chaudhri, a 21-year veteran of Apple, may be the first mover in this new category, but Humane will soon have plenty of company. AI is shaping up to drive the greatest hardware battle since the smartphone. In recent months, a handful of competitors have also started to disclose details on their particular vision for what a physical AI assistant could be. Those include two wearable microphones: Avi Schiffmann’s Tab and Dan Siroker’s Rewind Pendant, the latter of which has $33 million in funding. Meanwhile, OpenAI’s Sam Altman appears to be hedging his bets with LoveFrom’s Jony Ive, as the two are reportedly in talks to raise $1 billion from SoftBank around an “iPhone of artificial intelligence.”

But being the first out of the gate or the best-funded company doesn’t guarantee success. An explosion of smartphones with all sorts of unique UX paradigms—keyboards, sliders, trackballs—existed for years before the iPhone’s touchscreen made them go extinct. Like any paradigm shift in computing, the revolution will be driven not by the fastest tech, but by the most usable and essential design.

Humane’s hardware

In Humane’s studio in San Francisco’s SoMa district, the hardware is laid out on tables in neat rows, giving the presentation a full Apple Store vibe.

My first impression, as Chaudhri tours me through the various stages involved in milling the Ai Pin’s aluminum frame, is that it reminds me a lot of the higher-end hardware produced by Samsung. A custom 12-layer, two-sided motherboard fits inside, somehow squeezing a smartphone’s worth of chips, along with a camera, depth sensor, and the aforementioned laser projector, into a thick matchbook. I consider how solid it is in my hand—which gives it a premium feel—before wondering how it can possibly drape on a lighter-weight blouse or dress.

A four-hour battery lives on the device. But to actually affix it to your shirt, you’ll attach a “battery booster,” which sticks onto the back of the Ai Pin through surprisingly powerful magnets. The fabric of your garment sits in between the Pin and the battery like a clothing sandwich (and the power is actually transmitted wirelessly through your fabric). To let you know it’s secure and actually stuck in place, the Pin makes a small chirp—a graceful assurance that it’s not going to go plummeting from your shirt to the ground.

In a pleasant UX touch, setting up the device won’t involve downloading apps or porting your contacts over from your phone, I’m told. Instead, after ordering the device, you’ll be able to link your various accounts on the cloud. The Ai Pin will arrive with all of this set up for you. To log in the first time, you scan what looks like a QR code crossed with a Tarot card. When you receive the Ai Pin, you scan it, enter your passcode, and it’s ready to go. You can set the device to require you to reenter your passcode whenever you swap the battery, or at various intervals of time.

The Pin ships with a handful of accessories, and there are even more available for purchase. Humane offers two charging pads (a plastic one that comes with the Pin and an optional one in undulating silver). Additionally, an egg-shaped charging case can refill the Ai Pin on the go. It can also top off just one spare battery while you wear the Pin, but due to the empty space, the battery rattles around in the case like Yahtzee dice.

Then there are shields, which wrap around the Ai Pin for cosmetic customization, including nudes in three skin tones, and Nike-inspired neon pastels. As I examine all these objects on the table, the team shows off Ai Pins in a dozen or more finishes like 22 karat gold. “We see this as something that we will be releasing multiple colorways up, just [like] sneaker drops,” Chaudhri says. This entire accessory and future hardware reveal is the one time in the last five years that I can say that Humane shared a lot, a whole lot, more than I expected. But looking at tables of accessories—multiple docks, chargers, backers—I mostly wondered if they amounted to a pretty distraction from the Pin’s more fundamental design issue.

Humane’s big promise

Humane developed this hardware specifically to create new forms of user experience that don’t require putting a screen in between you and the world itself. While Chaudhri attributed the idea to WSJ columnist Walt Mossberg in his introductory TED talk, this idea of “calm” or “ubiquitous computing” was first proposed by the late UX legend Mark Weiser, a computer scientist and chief technology officer at Xerox PARC in the 1980s and ’90s. Even before the rise of smartphones, he wanted to address society’s rising addiction to screens. Weiser was brilliant, and reading his philosophical research papers can still give me chills 30-plus years after he wrote them. But Weiser lacked modern technologies, ranging from Wi-Fi to AI, to bring most of his visions into reality.

“If we get this right, AI will unlock a world of possibility for all of us,” Chaudhri said at TED. Before my demo, what Humane meant by “possibility” was vague. Afterward, it was disappointing. Ken Kocienda, the product architect at Humane and a former principal engineer of iPhone software who worked on the design team, started with a demonstration of things the Ai Pin can do when responding to your voice.

The demo included use cases we’ve seen before: looking up the weather, playing a song, checking Kocienda’s schedule, and writing a haiku about his morning commute. To “settle bar bets,” with a tap, he asked the Ai Pin, “Who was president in the year 1900?” Later, he prompted it to speak in the style of Shakespeare.

We’ve lived with “AI assistants” for years now—software and devices that can already tell us the weather and answer trivia. I appreciated the Ai Pin’s ability to summarize a list of text messages into a few insights. But that was the most functional difference between the Ai Pin and existing products I could discern from my 30-minute demo.

The real promise of a wearable AI is that it’s there to quietly collect knowledge about your life and turn it into action. But to protect your privacy, the Ai Pin doesn’t listen for wake words like “Siri” or “Alexa,” which feels self-defeating for a system that you control 99% of the time with your voice. Everything you do on the device requires tapping the Ai Pin and talking. Even on these simple queries running inside Humane’s controlled environment, lengthy response times made it feel anywhere from a little slow to so slow that Kocienda had to ask his question a second time, only to trip over the Pin’s answer.

That’s in part due to the fact that while these modern AIs are eloquent, they’re often much slower than human speech, let alone a Google search. This lag is one reason the Ai Pin’s core interaction model of talking was challenged from the start. (Other reasons are more intrinsic. Humans use their hands for everything from drawing to gesturing to texting; consider how often you choose to text when you could voice-to-text.)

For the moments you don’t want to talk, you can pull up the screen. By planting your elbow into your ribs and holding your hand up very still—a gesture the Humane team has grown good at, but that feels quite unnatural to me—the spoken audio transforms into text on your hand. This transitional UX was silky smooth in person. The problem is, that projector feels like little more than a proof of concept. While I’m told the projected display automatically scales and rotates, large-print text appeared fuzzy, and the dense passage of what looked to be a Wikipedia article was illegible. The Pin’s display is fixed in one spot in the air, meaning it isn’t built to follow your hand if you shift. After the demo, I took a photo with the team and Kocienda projected it back onto his hand. My best comparison is the original Game Boy camera.

Despite Chaudhri’s big promise, the Ai Pin doesn’t circumvent the screen—it simply moves it to your palm, just as if you were holding a phone. Where was all the magical stuff? I wondered. The stuff where, because the Ai Pin is so overtly planted on our person, the rest of its demands could disappear?

A brighter spot in the demo was the Pin’s AI translation. Holding two fingers to the device, which will translate your speech through a crisp onboard speaker, seemed like a pleasant approach for conversing in another language (if just a bit slower than you might want). You can do this with an app today, but I highlight the moment because it was a rare case in Humane’s demos where it felt like their efforts to eliminate screens might actually enhance face-to-face social connection.

At TED, Chaudhri demonstrated how the Ai Pin could track his diet by holding up a chocolate bar and asking, “Can I eat this?” His Ai Pin recommended, “A milky bar contains cocoa butter. Given your intolerance, you might want to avoid it.” To demonstrate this feature to me, senior product engineer Yanir Nulman holds up a plastic apple to show the camera. He asks, “Can I eat this?” But the Pin notes he hasn’t been logging his food consumption today, so it can’t say.

Food logging won’t be ready for launch, but this interaction demonstrates the futility of delivering an “invisible interface” that still requires constant manual input. This seems to be the fundamental point Humane, and Chaudhri, have promised for years but failed to deliver, at least in this first version of their much-hyped product.

In practice, the Ai Pin reminded me of an Echo Dot on your chest. Ironically, Humane plans to partner with Amazon for easy product reordering, just like Alexa, and visual product search for the real world. “I can never [bring myself to] sit down in front of a machine and buy deodorant,” says Chaudhri, explaining the benefits of having Amazon on the Pin. This isn’t the future of AI, though; it’s the same old voice-assistant capitalism we’ve been pitched for a decade, but now it’s been moved from your kitchen counter to your person.

By the time the presentation wraps, I cannot believe how few features I am shown from the Ai Pin’s world-facing camera, beyond taking pictures. The two-finger tap looks handy for grabbing candid street photos, which an AI will crop for you since you can’t actually see your field of view. When you do record or take photos or videos, a Trust Light glows on the device. Unfortunately, this light is often outshone by another component on the device: a gold filter that covers the Ai Pin’s laser. The filter is prone to glimmer when it catches ambient light, making me believe the Trust Light was on several times when it wasn’t.

But my real question about the camera is: Where is the rest of its AI functionality—not built with Amazon, but for everything you want to do besides shop?

It just so happens that recently I’ve been navigating my way through Seoul with the assistance of Dot—an AI companion designed by another former member of the Apple design team, Jason Yuan, that remembers my interests and dietary preferences, searches the internet for me, translates signs, suggests itineraries, and saves me from squinting at maps and apps. No, it doesn’t speak aloud. But texting it is a slicker modality by far than the oddly constrictive touch-speak-wait-listen UX behind the Ai Pin. And if I could have my camera one step closer to Dot? I bet I could do even more with it.

Humane’s issue in a nutshell isn’t that a wearable assistant is inherently a flawed idea; it’s that Chaudhri’s product doesn’t yet solve the problem he has diagnosed and set out to mitigate: our dependence on technology, which removing the screen was supposed to ease. He has created a phone without a screen, yes, but the functionality we’ve lost in the process exceeds anything we’ve gained. Having seen the Ai Pin in action, a project that’s raised hundreds of millions of dollars for its half a decade in development, I’m left with the impression that Humane hasn’t unlocked the potential of the AI of today, let alone tomorrow, nor has it fundamentally solved any significant problems we have with technology. It’s just moved them two feet, from your pocket to your shirt.
