Google announced a set of initiatives Wednesday aimed at creating a more equitable product experience for people across the skin-tone spectrum.

At its Google I/O conference, the company unveiled a new 10-point skin tone scale: a set of 10 representative human skin tones that people can match to their own or to skin tones shown in photographs. The scale was developed with Ellis Monk, an associate professor of sociology at Harvard University known for his research into skin tone and colorism. In a Google-led study, a diverse set of research participants found the new Monk Skin Tone Scale to be more representative of a greater number of skin tones. The company is releasing the set of colors and information on its research so that others can use the scale or suggest improvements.

Google envisions the scale as a standardized way for people in the tech industry to build and test products across the range of human skin tones, and as a uniform way to discuss which ranges of colors are or are not well served by a particular product.

“This really is about creating an industry conversation,” says Tulsee Doshi, head of product for responsible AI at Google.

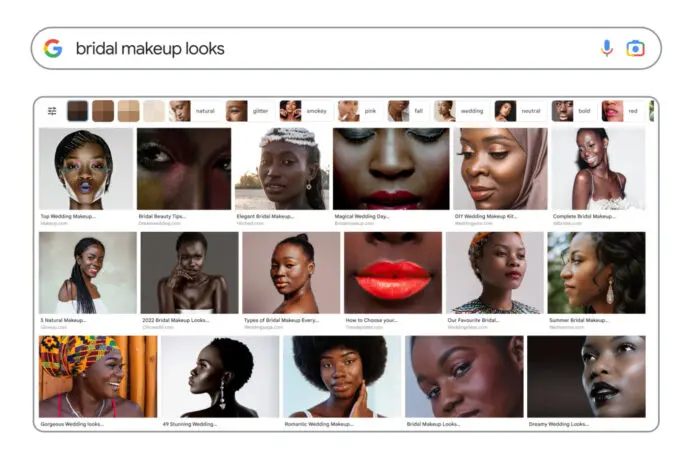

As Google works to make its own tools more equitable, the company will likely annotate images based on which skin tones from the Monk scale are depicted in them so that it can deliberately train and test AI and other technology on a diverse set of skin colors. In general, Google is looking to ensure that search results for skin tone-neutral queries like “cute baby” aren’t biased toward particular colors, Doshi says.

The company is also rolling out new filters for Google Photos as part of its existing Real Tone system, which is designed to help generate high-quality photos for a wide array of skin tones.

Having a standardized skin tone scale will also help people within the company and, potentially, the industry quickly communicate about skin color-related issues, Doshi says. Google is also interested in developing ways for publishers to annotate content, where relevant, to indicate which skin tones are present. That would be similar to how recipe publishers can now add metadata that helps search engines and their users find cooking instructions with certain features, she says.

“Doing that kind of work is not something that will be user-visible necessarily, but it is something that will just make our products better,” she says.
