In 2011, Taylor University, a small liberal arts college in Upland, Indiana, began to look for a new way to maximize recruitment. Specifically, the school needed to sell students on applying and enrolling, in part to help meet tuition revenue goals. That led to a contract with software giant Salesforce, which builds automated systems designed to boost student recruitment. The school now feeds data on prospective students (from hometown and household income to intended areas of study, among other data points) into Salesforce’s Education Cloud, which helps admissions officers zero in on the type of applicants they feel are most likely to enroll.

“If we find a population of student in northwest Chicago that looks like the ideal student for us, maybe there is another population center in Montana that looks just like that population,” says Nathan Baker, Taylor University’s director of recruitment & analytics.

“We’re also tracking the student’s engagement with us,” he says. “So, just because a student population looks ideal, if the student is not engaged with us at all during the process, we have to take that into account.”

Algorithms aren’t just helping to orchestrate our digital experiences; they are increasingly entering sectors that were historically the province of humans, such as hiring, lending, and flagging suspicious individuals at national borders. Now a growing number of companies, including Salesforce, are selling or building AI-backed systems that schools can use to track potential and current students, much in the way companies keep track of customers. Increasingly, the software is helping admissions officers decide who gets in.

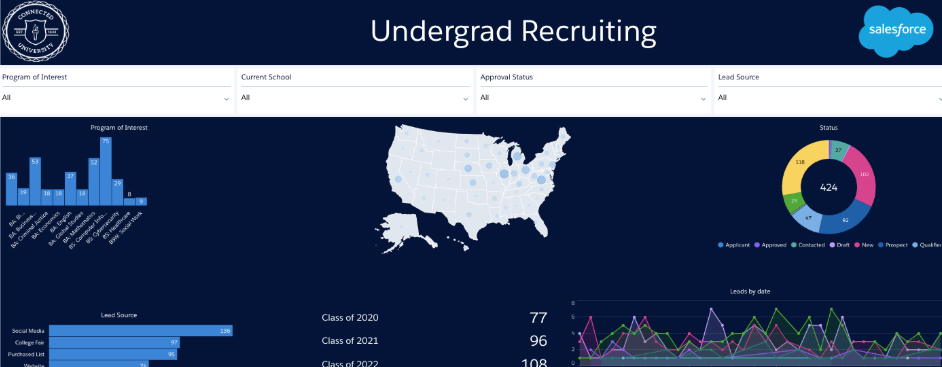

The tech companies behind admissions software say algorithms can improve the success of school recruitment efforts and cut costs. At Taylor University, which Salesforce touts as a “success story,” the admissions department says it saw improvements in recruitment and revenues after adopting the Education Cloud: In Fall 2015, the school welcomed its largest ever incoming freshman class. Taylor now uses the software to predict future student outcomes and make decisions about distributing financial aid and scholarships.

But the software isn’t just about streamlining the daunting task of poring over thousands of applications, says Salesforce. AI is also being pitched as a way to make the admissions system more equitable, by helping schools reduce unseen human biases that can affect admissions decisions.

“When you’ve got a tool that can help make [bias] explicit, you can really see factors that are going into a decision or recommendation,” says Kathy Baxter, architect of ethical practice at Salesforce. “It makes it clearer to see if decisions are being made based purely on this one factor or this other factor.” (Salesforce says it now has more than 4,000 customers using its Education Cloud software, but declined to disclose which schools are using it for admissions specifically.)

On a basic level, AI-backed software could immediately score an applicant against a set of factors found to be a signifier of success based on past applicants, explains Brian Knotts, chief architect at Ellucian, which builds software for higher education.

“An example could be the location of the student as compared to the location of the school,” he says. Admission officers would use this data-driven assessment to augment their decision-making process. “As each class graduates, the algorithms are periodically retrained, and future students only benefit from smarter and smarter admissions decisions,” says Knotts.

In a traditional admissions process, committees review applicants together and usually decide by a vote or some other type of consensus, which is meant to reduce individual bias. But unfairness can still creep into the process. For instance, as recent research into recruitment practices has shown, universities tend to market directly to desirable candidates and pay more visits to students in affluent areas, especially to wealthy, predominately white students at out-of-state high schools, since these students are likely to yield more tuition revenue.

Kira Talent, a Canadian startup that sells a cloud-based admissions assessment platform to over 300 schools, identifies nine types of common human bias in university admissions, including groupthink and racial and gender biases. But some of the most harmful biases impacting admissions are unrelated to race, religion, gender, or other stereotypes, according to a company presentation. Instead, biases grow “situationally” and often unexpectedly from how admissions officers review applicants, including an inconsistent number of reviewers and reviewer exhaustion.

Other kinds of more subtle biases can creep in through admissions policies. As part of an ongoing lawsuit brought by Asian-American students against Harvard University, the school’s Admissions Office revealed its use of an interviewer handbook that emphasizes applicant personality in a passage entitled “The Search for Distinguishing Excellences.” Distinguishing excellence, according to the handbook, includes “outstanding capacity for leadership,” which could disadvantage hardworking introverts, and “unusually appealing personal qualities,” which sounds like fertile ground for human bias to enter into the mind of an interviewer.

Baxter argues that software can help identify the biases that creep into human-based admissions processes. Algorithms can do systematic analyses of the data points that admissions officers consider in each application. For instance, the Salesforce software used by Taylor University includes Protected Fields, a feature that displays pop-up alerts in order to identify biases that may emerge in the data, like last names that might reveal an applicant’s race.

“If an admissions officer wants to avoid race or gender bias in the model they’re building to predict which applicants should be admitted, but they include zip code in their data set not knowing that it correlates to race, they will be alerted to the racial bias this can cause,” says Baxter.
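The kind of proxy check Baxter describes can be illustrated with a short sketch. This is not Salesforce’s implementation, which the company does not detail here; it is a minimal example under stated assumptions. The records, the field names `zip_code` and `group`, the helper functions, and the 0.2 cutoff are all hypothetical. The idea is to ask how much better a protected attribute can be guessed once a seemingly neutral field is known.

```python
from collections import Counter, defaultdict

# Hypothetical applicant records; field names and values are made up.
APPLICANTS = [
    {"zip_code": "zip_1", "group": "x"},
    {"zip_code": "zip_1", "group": "x"},
    {"zip_code": "zip_1", "group": "x"},
    {"zip_code": "zip_1", "group": "y"},
    {"zip_code": "zip_2", "group": "y"},
    {"zip_code": "zip_2", "group": "y"},
    {"zip_code": "zip_2", "group": "y"},
    {"zip_code": "zip_2", "group": "x"},
]


def baseline_rate(records, protected):
    """Accuracy of always guessing the most common protected value."""
    counts = Counter(row[protected] for row in records)
    return counts.most_common(1)[0][1] / len(records)


def proxy_strength(records, feature, protected):
    """Accuracy of guessing the protected value from the feature alone,
    using the most common protected value within each feature value."""
    groups = defaultdict(Counter)
    for row in records:
        groups[row[feature]][row[protected]] += 1
    correct = sum(c.most_common(1)[0][1] for c in groups.values())
    return correct / len(records)


def is_possible_proxy(records, feature, protected, min_gain=0.2):
    """Flag the feature if it beats the baseline guess by min_gain or more.
    The 0.2 cutoff is an arbitrary assumption for illustration."""
    gain = proxy_strength(records, feature, protected) - baseline_rate(records, protected)
    return gain >= min_gain


if __name__ == "__main__":
    print(is_possible_proxy(APPLICANTS, "zip_code", "group"))
```

In this toy data, knowing the zip code makes the group easier to guess than guessing blind, so the field is flagged. A production system would need a more careful statistical test and far more data than a handful of rows.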

Molly McCracken, marketing manager at Kira Talent, says that AI could also help eliminate situations where an admissions officer might know an applicant, automatically flagging a personal connection that exists between two parties. From there, a human review of the relationship could be undertaken by admissions officials.

McCracken proposes a hypothetical scenario where an admissions official who had been on the debate club in high school might identify with an applicant who was on the debate club as well. “But, if you train an algorithm to say the debate club is equal to x is equal to y, then you don’t have to bring that kind of [personal] background and experience [into the process],” McCracken says. Still, she cautions, “you also need that human perspective to be able to qualify these experiences.”

Kira’s set of bias-fighting tools is designed to reduce the impact one person’s bias can have during human review. A Reviewer Analytics feature aims to ensure that admissions officers are rating applicants consistently and fairly: by calculating the average rating for each reviewer across all applications, colleges can identify outliers who are scoring applicants with too much or too little rigor. To counter groupthink, which can give more weight to the loudest voice in the room, the software combines feedback from multiple reviewers without each reviewer seeing their colleagues’ ratings and notes, and produces an overall average score for each applicant’s responses.
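The two calculations described above are simple enough to sketch in a few lines. The following is an illustration only, not Kira Talent’s code: the ratings, the 1-to-5 scale, the function names, and the one-point outlier threshold are all assumptions made for the example.

```python
from statistics import mean

# Hypothetical data: (reviewer, applicant, rating on a 1-5 scale).
RATINGS = [
    ("reviewer_a", "applicant_1", 4),
    ("reviewer_a", "applicant_2", 3),
    ("reviewer_a", "applicant_3", 4),
    ("reviewer_b", "applicant_1", 3),
    ("reviewer_b", "applicant_2", 4),
    ("reviewer_b", "applicant_3", 3),
    ("reviewer_c", "applicant_1", 1),
    ("reviewer_c", "applicant_2", 1),
    ("reviewer_c", "applicant_3", 2),
]


def average_by(ratings, key_index):
    """Average the scores grouped by reviewer (0) or applicant (1)."""
    grouped = {}
    for row in ratings:
        grouped.setdefault(row[key_index], []).append(row[2])
    return {key: mean(scores) for key, scores in grouped.items()}


def flag_outliers(reviewer_averages, threshold=1.0):
    """Return reviewers whose average differs from the mean of all
    reviewer averages by more than the (assumed) threshold."""
    overall = mean(list(reviewer_averages.values()))
    return {
        reviewer: avg
        for reviewer, avg in reviewer_averages.items()
        if abs(avg - overall) > threshold
    }


if __name__ == "__main__":
    # Each reviewer's average across all applications.
    per_reviewer = average_by(RATINGS, 0)
    print(flag_outliers(per_reviewer))
    # Combined score per applicant, computed from independent ratings.
    print(average_by(RATINGS, 1))
```

With this sample data, only the reviewer who consistently scores far below colleagues is flagged. Whether that reviewer is too harsh or simply drew weaker files is a question the numbers alone cannot answer, which is why a human would still need to look.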

Ryan Rucker, a project manager at Kira Talent, says the company is currently in the research and development phases of adding AI-backed software to the company’s admissions tools. Eventually, that could also help schools conduct deeper background checks into an applicant’s personal history. That could, for instance, help prevent the kind of cheating seen in the recent university admissions scandal, where wealthy applicants’ parents paid for preferential admission by having the applicants pose as athletes.

“As we move further into this place where we’re using more machine learning and AI-enabled solutions, we’re going to get better at checking for certain things, like whether someone is actually a member of the crew team in high school,” says Rucker, alluding to the same scandal. “This information is typically publicly available on a website, which we could crawl for that,” he adds. “Of course, if we want to get into the area of data privacy, that’s a totally different topic.”

Letting machine bias in?

However, as artificial intelligence experts have cautioned, systems that aim to reduce bias through AI could be complicated by AI itself. Automated systems will only be as good as the underlying data, says Rashida Richardson, director of policy research at AI Now Institute, a think tank at New York University that studies machine bias and algorithmic accountability. And since admissions decisions are embedded with many subjective judgments, Richardson believes attempting to automate them can result in “embedding and possibly concealing these subjective decisions,” quietly replicating the problems that these systems purport to address.

Automation has raised similar alarms in sensitive domains like policing, criminal justice, and child welfare. If future admissions decisions are based on data from past decisions, Richardson warns, the result could be an unintended feedback loop that limits a school’s demographic makeup, harms disadvantaged students, and puts the school out of sync with changing demographics.

“There is a societal automation bias—people will assume that because it is a technical system it is fairer than the status quo,” says Richardson. “Research on the integration of matching algorithms in medical schools and how these systems helped facilitate and distort discriminatory practices and bias is proof that these concerns are real and highly likely.”

Richardson says that companies like Ellucian, Salesforce, and Kira Talent fail to acknowledge on their websites that there are significant educational equity issues built into the admissions process.

“It is unclear to me how you standardize and automate a process that is not only based on subjective judgments but also requires a deep understanding of context,” she says.

Richardson notes, for instance, that many high schools participate in grade inflation and heterodox grading systems. While admissions officers may be aware of this and account for it in the admissions process, an AI system may not.

“Similarly, an AI system may not appreciate the additional challenges a lower income or first-generation students face, compared to a more affluent legacy,” Richardson cautions. “It’s possible an AI system or automated process may exacerbate existing biases.”

Software could also lead to a further class divide in the admissions process, Richardson worries. Just as people try to game the existing human-driven admissions process, applicants might try to do the same if the factors used by automated systems are known. Higher resource groups, like the wealthy and connected, might find ways to enhance applications for the most favorable outcome. Richardson says that AI Now has already seen this happen with more basic algorithms that assign students to K-12 schools.

“Families that have time to make complicated spreadsheets to optimize their choices will likely have a better chance at matching with their top schools,” says Richardson, “whereas less resourced families may not have the time or enough information to do the same.”

Baxter says that machine bias safeguards are built into Salesforce’s Einstein AI, an AI-based technology that underpins the company’s software. A feature called Predictive Factors lets admissions users give feedback to the AI model, allowing them to identify if a biasing factor was included in the results. And Education Cloud’s Model Metrics feature helps gauge the performance of AI models, says Baxter, allowing users to better understand any previously unforeseen outcomes that could be harmful. “Our models are regularly being updated and learning from that ongoing use, so it’s not a static thing,” Baxter says.

To ensure that data isn’t biased from the get-go, Ellucian is selecting appropriate features, data types, and algorithms, and plans to vet them through an advisory board populated with technologists, data scientists, and other AI experts. “That’s part of the reason why we’ve taken a long time,” says Knotts. “We want to make sure that we spend as much time on this as we have on the technology.”

AI Now’s Richardson says any advisory board without domain expertise, like educators, administrators, and education scholars and advocates—specifically, ones with experience in discrimination, educational equity, and segregation—would be insufficient.

Richardson is also concerned that companies offering automated admissions solutions might not understand just how subjective the process is. For instance, admissions officers already weigh a student from an elite boarding school more heavily than a valedictorian from an under-resourced, segregated school, creating a socioeconomic bias. She doubts AI could resolve this issue, to say nothing of approaching it with enough sophistication to “understand both the sensitivity that should be given to socioeconomic and racial analysis.”

Predicting student success is also a delicate challenge. Some students are late bloomers. Others excel in high school, but for one reason or another fall short in and after college. So many different variables—seen and unseen, measurable and not—factor into a student’s performance.

“It falls within this trend within tech to think everything is predictable and that there is a predetermined notion on success,” she says. “There are many variables that have been traditionally used to predict student success that have been shown to be inaccurate or embedded with bias (e.g. standardized test scores). At the same time, there is a range of unforeseeable issues that can affect individual or collective student success.”

These traditional metrics of success can also be biased against marginalized students, and they do not take into account institutional biases, like high school counselors who ignore or fail to serve students of color.

At some schools, even some automation in admissions is a no-no. “Unlike many other colleges and universities, we do not use AI or other automated systems in our decisioning,” says Greer Davis, associate director of marketing and communications at the University of Wisconsin-Madison. “All applications and all supporting material are read by multiple counselors using a holistic approach, and we do not have or use any minimums, ranges, or formulas.”

UC-Berkeley is also resisting machine-driven admissions. Janet Gilmore, UC-Berkeley’s senior director of communications & public affairs, says the university has no automated processes, and that the applicant’s complete file is evaluated by trained readers. Its admissions process website states that “race, ethnicity, gender, and religion are excluded from the criteria,” and that the school uses a holistic review process that looks at both academic (course difficulty, standardized test scores) and nonacademic factors. In the latter case, it could be personal qualities such as character and intellectual independence, or activities like volunteer service and leadership in community organizations.

Richardson does, however, see a place for automation in reducing the workload in processing admissions. Software could, for instance, help eliminate duplicate documents or flag missing documents from applicants.

The newest admissions and recruitment software aims to do far more than flag incomplete files. But Knotts, of Ellucian, insists the aim is not to fully automate the decisions themselves, at least not yet. “We don’t want to make computer decisions with these applicants,” he says. “And I don’t think the education sector wants that either.”
