If you’ve ever tried out a dieting app, you might have filled out a questionnaire asking about your body type, weight, exercise, and eating habits, and possibly even medical information, like whether you have diabetes. Ostensibly that data is used to inform what kind of diet the app suggests, but new research reveals diet companies may be using it in other ways. According to London-based nonprofit Privacy International, diet apps are sometimes sharing this data with third-party marketers and failing to protect it securely. The report also raises questions about whether U.S. laws adequately protect online health data that isn’t hosted by a medical entity.

Researchers at the organization filled out questionnaires for the diet apps Noom, BetterMe, and VShred several times, each time entering slightly different data to see whether it produced a different recommendation. The researchers found that regardless of the data entered, the results tended to be the same. For example, the researchers entered a variety of starting weights and goal weights into BetterMe. Each time, the suggested plan was identical, promising that the person could lose nine pounds after the first week of the program and that 83% of “similar people” lost more than 17 pounds on the platform. (In a response, BetterMe says that the data is used to determine a daily calorie intake and whether individuals have dietary preferences, such as vegetarianism.)

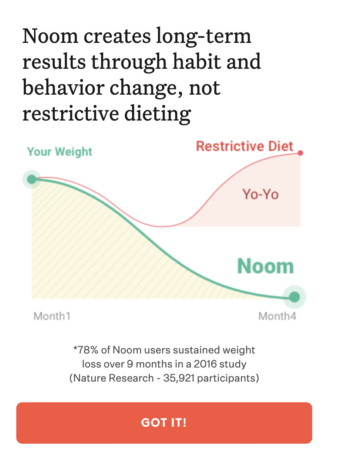

The same was true for VShred, which asked for gender, age, height, weight, exercise habits, and workout goals. While the company did provide people with a custom set of “daily macros,” or allowed calories, carbohydrates, fats, and protein per day, its fitness and nutrition recommendations consisted of the same books and mobile videos regardless of the information entered. Noom, by contrast, gives clients a timeline within which they will lose weight and then asks for additional personal information as a way of predicting the shortest amount of time needed to meet a weight goal. In total, Privacy International estimates that Noom asks at least 50 questions about a person’s mental health, physical health habits, and medical profile.

So what happens to this information? Among its other findings, Privacy International found that information entered on VShred’s website appeared in its URLs, making it accessible to third-party ad platforms like Google Analytics, Facebook, and Yandex. On BetterMe, only information about gender seemed to show up in URL data. Researchers also found that Noom actively shared all of its consumer data with Fullstory, a data analytics firm.

In a response to Privacy International, BetterMe cited its privacy policy. VShred did not respond to a request for comment by press time, but its privacy policy discloses that it collects and shares information with third parties, including geolocation. In response to a request for comment, a Noom spokesperson said: “Noom takes its data protection obligations seriously and has developed a robust data protection compliance program to comply with evolving legal requirements.” The spokesperson added that data is shared only with service providers and is collected to enhance the user experience.

While these companies are collecting health data (and in some cases medical information), that data is not protected under the Health Insurance Portability and Accountability Act (HIPAA). There is no transparency into whether this data is being adequately protected or used for ad targeting, says Privacy International senior researcher Eva Blum-Dumontet.

Blum-Dumontet also raises concerns about whom dieting companies may be targeting. A nonprofit called Anorexia and Bulimia Care has found that “eating disorder” and similar terms appear among suggested keywords for ad targeting. “Those ads can be really triggering,” says Blum-Dumontet. They can also lead people with disordered eating habits to engage with content they would otherwise stay away from, she says.

In Europe, online data is protected by the General Data Protection Regulation (GDPR), but data privacy laws in the United States are more limited and vary by state. Even so, many companies in Europe can invoke “legitimate interest,” a legal basis that allows companies to share consumer data based on a person’s potential interest in a product or service. Under GDPR, companies must also obtain direct consent to set cookies, the small files that record data generated from browsing on a particular site. But in both the U.S. and Europe, companies are fairly well protected from lawsuits simply by clearly stating in their privacy policies that they collect and share data.

In an October 2020 lawsuit, Noom and Fullstory were accused of illegal wiretapping, eavesdropping, and invasion of privacy for using technology to track what visitors do on the Noom website. In April, a judge dismissed the case on the grounds that the claims did not legally pass muster. In its defense, Fullstory noted that the script Noom embeds to collect information is only temporarily downloaded onto a user’s device, is active only while that person is connected to the website, and is deactivated or deleted afterward. Noom’s privacy policy, however, states: “Noom may use User’s information that Noom collects about User to provide User with marketing materials or relevant advertising, promotions and recommendations from Noom or our business partners.”

Despite the outcome, Blum-Dumontet says, the lawsuit is telling. “I think [the lawsuit] really shows the genuine concerns of users over this kind of behavior,” she says. “This behavior is concerning and raising a legal challenge is still completely on the table.”
