An estimated half a million Americans with hearing impairments use American Sign Language (ASL) every day. But ASL has one shortcoming: While it allows people who are deaf to communicate with one another, it doesn’t enable dialogue between the hearing and the nonhearing.

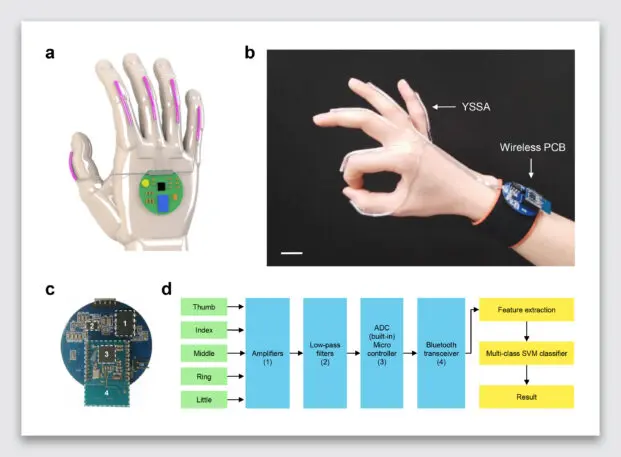

Jun Chen, the assistant professor at UCLA’s Department of Engineering who led the research, tells us he was inspired to create the glove after growing frustrated while trying to talk with a friend who has a hearing impairment. He looked at other solutions that had been proposed to translate ASL and found them imperfect. Vision recognition systems need the right lighting to make fingers legible. Another proposed solution, which tracks the electrical impulses through your skin to read signs, requires precise placement of sensors to get proper measurements.

The project doesn’t address a main criticism of ASL translation tools: that Deafness is a culture unto itself, one that should not have to alter its behavior to accommodate the hearing community.

So what’s next for the project? Waiting. Chen suggests it could be another three to five years of “polish” before the technology is ready to be mass-produced. Over that time, the glove could also learn to translate sign languages beyond ASL (support for only one language has been another shortcoming of earlier sign language translation projects). Hopefully the project moves forward, because it’s not just a simple idea for bringing ASL to more people; it’s a demonstration of what’s possible with wearable technology when we think beyond another smartwatch.
